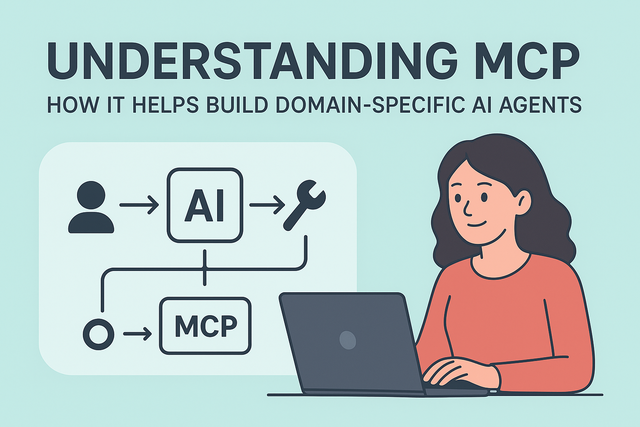

Large Language Models (LLMs) like GPT, Claude, or LLaMA are great at conversation, reasoning, and problem-solving. But on their own, they are generalists — they don’t have direct access to your company’s databases, industry-specific tools, or real-time information.

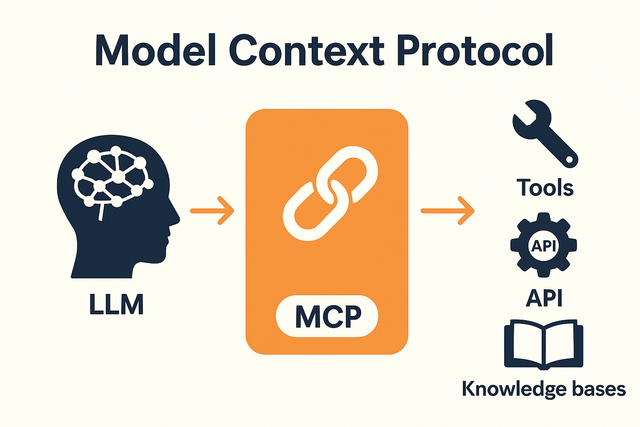

This is where the Model Context Protocol (MCP) comes in. MCP acts as a bridge that connects LLMs to the outside world — to tools, APIs, and knowledge bases. With MCP, you can turn a general LLM into a domain-specific AI agent that understands and operates within your unique environment.

The Model Context Protocol was introduced by Anthropic in November 2024 to standardize how LLMs connect with external systems. This innovation enables developers to create safe, reliable, and specialized AI agents. You can read the full details in the official Anthropic MCP announcement.

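Think of MCP as a universal connector between an LLM and the resources it needs. Instead of requiring a custom integration for every tool, MCP provides a standard way for tools to describe what they can do, for the AI to discover and call them, and for the results to flow back into the AI’s context.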

Let’s break it down in simple steps:

► Tools Register Their Capabilities

Every tool or data source connected through MCP describes what it can do in a clear, machine-readable format.

Example (simplified; the MCP specification names the schema field `inputSchema`):

    {
      "name": "get_customer_profile",
      "description": "Fetches customer details from CRM",
      "parameters": {
        "type": "object",
        "properties": {
          "customer_id": { "type": "string" }
        },
        "required": ["customer_id"]
      }
    }

This tells the AI: “I can get customer profiles if you give me a customer ID.”
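To make the registration step concrete, here is a minimal Python sketch. It is not the MCP SDK: `TOOL_REGISTRY` and `register_tool` are hypothetical names invented for illustration, and the handler body is a placeholder rather than a real CRM call. The idea is simply that a tool's description and the function that implements it are stored together.

```python
from typing import Any, Callable

# Hypothetical in-memory registry, standing in for a real MCP server.
TOOL_REGISTRY: dict[str, dict[str, Any]] = {}


def register_tool(name: str, description: str, parameters: dict[str, Any]) -> Callable:
    """Decorator that records a tool's description next to its handler."""
    def decorator(handler: Callable[..., Any]) -> Callable[..., Any]:
        TOOL_REGISTRY[name] = {
            "name": name,
            "description": description,
            "parameters": parameters,
            "handler": handler,
        }
        return handler
    return decorator


@register_tool(
    name="get_customer_profile",
    description="Fetches customer details from CRM",
    parameters={
        "type": "object",
        "properties": {"customer_id": {"type": "string"}},
        "required": ["customer_id"],
    },
)
def get_customer_profile(customer_id: str) -> dict[str, Any]:
    # Placeholder: a real handler would query the CRM here.
    return {"customer_id": customer_id}
```

Keeping the schema beside the handler means the description the AI sees and the code that runs cannot silently drift apart.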

► The AI Discovers What’s Available

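When the AI agent starts up, it asks each connected MCP server: “What tools can I use?” (In the MCP specification, this is the `tools/list` request.) The server answers with the descriptions its tools have registered.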

Now the AI knows its toolbox.
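The discovery step might look roughly like the sketch below. Everything here is an assumption made for illustration: `REGISTRY`, `list_tools`, and `describe_toolbox` are hypothetical names, and the registry is a plain dictionary rather than a real MCP server. The sketch shows the server exposing descriptions only, and the client turning them into text the model can read.

```python
from typing import Any

# Hypothetical server-side registry; the handler stays private to the server.
REGISTRY: dict[str, dict[str, Any]] = {
    "get_customer_profile": {
        "description": "Fetches customer details from CRM",
        "parameters": {
            "type": "object",
            "properties": {"customer_id": {"type": "string"}},
            "required": ["customer_id"],
        },
        "handler": lambda customer_id: {"customer_id": customer_id},
    },
}


def list_tools(registry: dict[str, dict[str, Any]]) -> list[dict[str, Any]]:
    """Return only the machine-readable descriptions, never the handlers."""
    return [
        {
            "name": name,
            "description": entry["description"],
            "parameters": entry["parameters"],
        }
        for name, entry in registry.items()
    ]


def describe_toolbox(tools: list[dict[str, Any]]) -> str:
    """Render the discovered tools as text for the model's prompt."""
    lines = []
    for tool in tools:
        args = ", ".join(tool["parameters"].get("required", []))
        lines.append(f"- {tool['name']}({args}): {tool['description']}")
    return "\n".join(lines)


toolbox = list_tools(REGISTRY)
print(describe_toolbox(toolbox))
# prints: - get_customer_profile(customer_id): Fetches customer details from CRM
```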

► The AI Decides When to Use a Tool

When you give the AI a task, it decides whether to call one of these tools.

Example request (simplified; on the wire, MCP carries this as a JSON-RPC `tools/call` message):

    {
      "action": "get_customer_profile",
      "parameters": { "customer_id": "C-00123" }
    }

MCP then passes this request to the right system, collects the response, and brings it back to the AI.
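The routing described above can be sketched in a few lines of Python. This follows the simplified request shape used in this article (`action` and `parameters`), not the real MCP wire format, and `HANDLERS` and `dispatch` are hypothetical names. The CRM lookup is a stub that returns fixed sample data.

```python
from typing import Any


def get_customer_profile(customer_id: str) -> dict[str, Any]:
    # Stub standing in for a real CRM query; returns fixed sample data.
    return {
        "customer_id": customer_id,
        "name": "Alice Johnson",
        "status": "Premium",
        "last_purchase": "2025-09-10",
    }


# Hypothetical routing table: tool name -> required parameters and handler.
HANDLERS: dict[str, dict[str, Any]] = {
    "get_customer_profile": {
        "required": ["customer_id"],
        "handler": get_customer_profile,
    },
}


def dispatch(request: dict[str, Any]) -> dict[str, Any]:
    """Route a tool request to its handler and wrap the outcome."""
    action = request.get("action")
    entry = HANDLERS.get(action)
    if entry is None:
        return {"name": action, "error": f"unknown tool: {action}"}

    params = request.get("parameters", {})
    missing = [key for key in entry["required"] if key not in params]
    if missing:
        return {"name": action, "error": f"missing parameters: {missing}"}

    return {"name": action, "result": entry["handler"](**params)}


response = dispatch(
    {"action": "get_customer_profile", "parameters": {"customer_id": "C-00123"}}
)
```

Returning errors as data, rather than raising, lets the AI see what went wrong and try again with a corrected request.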

► The AI Uses the Results in Its Reasoning

The tool’s response gets added to the AI’s context, so it can use that data in its answer.

Example response from the CRM:

    {
      "name": "get_customer_profile",
      "result": {
        "customer_id": "C-00123",
        "name": "Alice Johnson",
        "status": "Premium",
        "last_purchase": "2025-09-10"
      }
    }

Now the AI can say:

“Alice Johnson is a Premium customer. Her last purchase was on September 10, 2025.”

Less guessing. Less hallucination. The answer is grounded in data the CRM actually returned.
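Here is one way the "result goes into the context" step could look in Python. The message format (a list of dictionaries with a `role` key) is an assumption loosely modeled on common chat APIs, not something MCP defines, and `add_tool_result` and the user question are hypothetical.

```python
import json
from typing import Any


def add_tool_result(
    messages: list[dict[str, str]], response: dict[str, Any]
) -> list[dict[str, str]]:
    """Return a new message list with the tool response appended as context."""
    tool_message = {
        "role": "tool",
        "name": response["name"],
        "content": json.dumps(response["result"]),
    }
    return messages + [tool_message]


# Hypothetical conversation so far.
messages = [{"role": "user", "content": "What is the status of customer C-00123?"}]

# Tool response in the simplified shape used in this article.
response = {
    "name": "get_customer_profile",
    "result": {
        "customer_id": "C-00123",
        "name": "Alice Johnson",
        "status": "Premium",
        "last_purchase": "2025-09-10",
    },
}

messages = add_tool_result(messages, response)
```

On its next turn, the model sees the CRM data alongside the user's question and can answer from it directly.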

MCP transforms LLMs from general-purpose conversationalists into specialized AI agents that can safely and reliably work in your domain.

By standardizing how models discover, call, and use tools, MCP makes it easier to build AI systems that are safe, reliable, and specialized for your domain.

In short: if you want an AI agent that doesn’t just talk but can actually do things with your systems and knowledge, MCP is the missing link.